TiFF

- Thread starter J-see

- Start date

Hi J-see

No, I haven't tried it, but I have a question I'm sure you are the one to answer.

I recently tried saving a shot as TIFF from NX2, but when I go to FastStone to print (because it works well for me),

the thumbnail says 1 of 2, and when I print, it prints two pages: the first very pixelated, then the second perfect.

What's that all about? I've heard of sidecar files; is this one?

Thanks, J-see

What options did you use when saving the TiFF?

A TiFF can hold a collection of multiple images in one file, and some programs have issues reading those while others have no problems at all. I encounter such problems when opening my RT TiFFs in GIMP.

I can't really help you, since I don't know why some readers struggle with these files while others handle the identical files fine. It could just as well be a save option in NX2 as a load problem in FastStone.

Maybe someone else knows more.
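One way to see what is actually inside such a file is to walk its image directories (IFDs). Below is a minimal Python sketch, assuming a classic (non-BigTIFF) file. The function name `list_tiff_pages` is made up for illustration, and the idea that the "1 of 2" comes from a reduced-resolution preview stored as an extra image is only a guess that this check could confirm or rule out.

```python
import struct

# Standard TIFF tag numbers
TAG_NEW_SUBFILE_TYPE = 254   # bit 0 set = reduced-resolution version of another image
TAG_IMAGE_WIDTH = 256
TAG_IMAGE_LENGTH = 257       # image height

def list_tiff_pages(data: bytes):
    """Follow the IFD chain of a classic TIFF and describe each image in it."""
    order = {b"II": "<", b"MM": ">"}.get(data[:2])
    if order is None:
        raise ValueError("not a TIFF file")
    magic, offset = struct.unpack(order + "HI", data[2:8])
    if magic != 42:
        raise ValueError("not a classic TIFF (BigTIFF is not handled here)")

    pages, seen = [], set()
    while offset and offset not in seen:      # 'seen' guards against looped chains
        seen.add(offset)
        (count,) = struct.unpack_from(order + "H", data, offset)
        info = {"width": None, "height": None, "reduced": False}
        for i in range(count):
            entry = offset + 2 + 12 * i       # each IFD entry is 12 bytes
            tag, typ, _n = struct.unpack_from(order + "HHI", data, entry)
            if typ == 3:                      # SHORT, stored inline, left-justified
                (value,) = struct.unpack_from(order + "H", data, entry + 8)
            elif typ == 4:                    # LONG, stored inline
                (value,) = struct.unpack_from(order + "I", data, entry + 8)
            else:
                continue                      # other types are not needed here
            if tag == TAG_IMAGE_WIDTH:
                info["width"] = value
            elif tag == TAG_IMAGE_LENGTH:
                info["height"] = value
            elif tag == TAG_NEW_SUBFILE_TYPE:
                info["reduced"] = bool(value & 1)
        pages.append(info)
        # the 4 bytes after the last entry point to the next IFD (0 = end)
        (offset,) = struct.unpack_from(order + "I", data, offset + 2 + 12 * count)
    return pages
```

To try it, read the file as bytes (for example `open("shot.tif", "rb").read()`, where `shot.tif` is a placeholder name) and pass them to `list_tiff_pages`. Two entries, one of them small or flagged as reduced, would suggest the pixelated print is a preview image rather than a sidecar file.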

Fred Kingston

Senior Member

There are plenty of comparisons between 8-bit and 16-bit TIFF... 99% of printers don't use 16-bit, and I doubt you can actually SEE the difference on any monitor made today... most software that uses TIFF will automatically convert 16-bit to 8-bit anyway...

That is not the same point, though. Almost any feasible and useful output (print or video, anything short of Lucas Labs) will be 8 bits, which is very adequate for output. But the point of 16-bit TIF input is that it has some of the advantage of 12- or 14-bit raw (the bitwise advantage, but NOT the other raw advantages). The extra bits only matter while editing (they mostly help with drastic editing shifts). Then, of course, the output for print or video (video meaning monitors) is 8 bits.
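The editing point can be illustrated with a small Python sketch. It assumes a deliberately crude "drastic edit" (divide every tone by 16, store the result, then multiply by 16 again) and integer rounding at the working bit depth; the function name is made up for the example, and real editors do not work exactly like this.

```python
def distinct_levels_after_edit(bits: int, factor: int = 16) -> int:
    """Darken a 256-step gradient by `factor` at `bits` working precision,
    brighten it back, and count the distinct 8-bit output levels left."""
    max_val = (1 << bits) - 1
    scale = max_val // 255                  # 1 for 8-bit, 257 for 16-bit
    levels = set()
    for tone in range(256):
        working = tone * scale              # same tone at working precision
        darkened = working // factor        # precision is lost at this step
        restored = darkened * factor
        # round back to 8 bits, as for print or monitor output
        levels.add((restored + scale // 2) // scale)
    return len(levels)

eight_bit_levels = distinct_levels_after_edit(8)
sixteen_bit_levels = distinct_levels_after_edit(16)
```

With these assumptions the 8-bit path ends up with only 16 distinct output levels (visible banding), while the 16-bit path still delivers all 256 levels to the 8-bit output.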

But 8-bit RGB is 3 bytes per pixel. For 36 megapixels, that's 108 MB files. And lossless compression is not incredibly efficient like JPG is. And camera TIF is not compressed anyway. And it's only 8 bits... the ONLY advantage of TIF over JPG is no JPG artifacts. Otherwise (if 8 bits), all the same issues apply. The only differences are compression and arbitrary file format issues.

TIF has advantages, mostly for saving in-work steps losslessly (avoiding accumulating JPG artifacts). TIF also defines more format options, such as CMYK color, which is generally used commercially but does not apply to camera images. And, for example, our raw files are simply a specialized TIF format (with new data definitions only readable by raw software).

But I can't imagine anyone using camera TIF files. 12-bit raw is vastly more feasible at 1.5 bytes per pixel, and of course it has all the lossless editing advantages too. If you then do want 8- or 16-bit TIF, it is an easy output from raw.
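The size arithmetic in this post can be checked with a few lines of Python. This is only a sketch of uncompressed pixel data: it ignores headers, embedded previews, and compression, takes 1 MB as one million bytes, and assumes raw stores one sample per pixel while RGB TIF stores three. The function name is made up for the example.

```python
def uncompressed_size_mb(megapixels: float, bits_per_sample: int,
                         samples_per_pixel: int) -> float:
    """Approximate size of uncompressed pixel data in MB (10**6 bytes)."""
    bytes_per_pixel = bits_per_sample * samples_per_pixel / 8
    return megapixels * bytes_per_pixel

tif_8bit = uncompressed_size_mb(36, 8, 3)     # 8-bit RGB TIF: 3 bytes per pixel
tif_16bit = uncompressed_size_mb(36, 16, 3)   # 16-bit RGB TIF: 6 bytes per pixel
raw_12bit = uncompressed_size_mb(36, 12, 1)   # 12-bit raw: 1.5 bytes per pixel

print(f"8-bit TIF: {tif_8bit:.0f} MB, 16-bit TIF: {tif_16bit:.0f} MB, "
      f"12-bit raw: {raw_12bit:.0f} MB")
```

For a 36-megapixel sensor this gives 108 MB for 8-bit TIF, 216 MB for 16-bit TIF, and 54 MB for uncompressed 12-bit raw, so the raw file is half the size of the 8-bit TIF while carrying more bits per sample.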

It can be said that raw software generally can edit TIF and JPG files as lossless edits. There are advantages, but of course, if you have the software, you optimally would just use raw files instead.

I'd use TiFF as output if it were 16-bit, since it makes editing in third-party editors quite easy. I work in TiFF constantly and only use the NEF as my start. It would eliminate one step and the need for cam and/or color profiles in RT.

But 8-bit isn't going to cut it as a start. 108 MB for a file holding less information than a NEF would not be a wise decision. An uncompressed NEF is already large enough.

Fred Kingston

Senior Member

I shoot film, and then scan... so I start in Tiff...

mountain.bayou.l

New member

Is there any advantage to just shooting in sRAW? I'm a long-time Nikon and Canon DSLR user who got into a D810 recently. Having been used to 500+ shots per 32 GB card, I was "stunned" to see only about 330 exposures. I have larger cards, but the CF and SD slots have different maximums on my D810; hence, is sRAW a way around this? I'm not creating billboards but rather common large prints and CMYK for magazines. New to this forum; please bear with me. Thank you

Sent from my iPad using Tapatalk

Nikon does not have an sRAW format. But yes, raw files are simply larger than JPG. Carrying additional memory cards is a solution.

The D810 has.

Horoscope Fish

Senior Member

Pretty sure the D4s does sRAW as well as the D810. I've always assumed it was added simply to keep up with Canon.

Yeah, the D4s and D810 both have it, but it's called RAW size S, with RAW size L being the normal one. It's a pretty useless format since I can just as well shoot 12-bit and be done with it.

Sorry, my mistake then. Actually, I did check the specs in the D810 manual to be sure before I spoke. There is no mention of sRAW anywhere. There are size specs for 1:2, DX, JPEG, etc., but not for Raw Small.

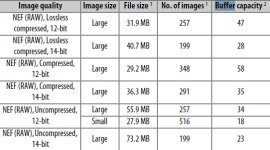

But I found it now, sort of buried in the memory card capacity chart, listed only as Uncompressed 12 bits - Small. It says the file is 1/2 size, but buffer capacity is also only about half, 18? That seems backwards?

As best I can tell, the manual contains no specs about its size in pixels. Small must be about the same size as JPG Medium?

It is very well hidden indeed; as if they're ashamed to have added it.

I never shot sRAW, but the manual says somewhere (page 85) that sRAW images are about half the size of their large-size counterparts. It's not very clear what they actually mean by that.